Priority queues in Python

In the previous article I noted that Python’s heapq module is the only part of standard Python I could think of which deals with sorting, but which doesn’t give you full control over the sort order.

That means you need to take care when using a heapq as a priority queue.

For example, the A* search algorithm is a best-first path finder. It maintains a priority queue of possible steps to take, ordered by an estimate of the total distance of the path routed through these steps. At each stage it pops the next step (the one with the shortest estimated total distance) from the queue, then updates based on the new position.

import heapq

def a_star(start, heuristic, moves):
    """A* graph search

    - start: initial state
    - heuristic(s): estimates path length from state s to finish.
      heuristic(s) == 0 => finished
    - moves(s): neighbours of s

    Returns the cost of the shortest path to the finish and the path
    itself, on success. None otherwise.
    """
    costs = {start: 0}
    prev = {start: None}
    frontier = [(heuristic(start), start)]
    heapq.heapify(frontier)

    def path(s):
        return [] if s is None else path(prev[s]) + [s]

    while frontier:
        priority, state = heapq.heappop(frontier)
        if heuristic(state) == 0:
            return costs[state], path(state)
        for n in moves(state):
            n_cost = costs[state] + 1
            if n not in costs or n_cost < costs[n]:
                costs[n] = n_cost
                heapq.heappush(frontier, (n_cost + heuristic(n), n))
                prev[n] = state

The code above is a Python A* implementation. For simplicity, we’ll assume the cost of each step is 1. It’s easy enough to adapt the function if this isn’t the case.

The priority queue here is named frontier, the collection of states we need to explore. The sequence heapify, heappop, heappush maintains the priority ordering. (The call to heapify isn’t even needed since a list with one element is already a heap.) So, each time we pop a state from the queue, we get the one with the lowest estimated cost. Then, based on the moves we can make from this new step, we update our internal records of costs and previous nodes.

Note that the items on the queue are (cost, state) pairs. The costs will be numbers, typically positive integers; the exact values depend on the heuristic function.

Exactly what gets used as state is up to the caller which supplies start, the initial state, and moves, which steps from a state to its neighbours.

However, if items on the queue are tied on cost, the heapq may need to compare state values. If the states have no defined ordering this results in a runtime error.

>>> class State: pass
...
>>> x1, x2 = State(), State()
>>> (1, x1) < (2, x2)
True
>>> (1, x1) < (1, x2)
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
TypeError: '<' not supported between instances of 'State' and 'State'

We cannot supply a sort key to the heapq functions. Does this mean clients must ensure state objects, whatever they actually are, can be compared? As the code stands, yes, but the module documentation has advice on handling this situation:

[…] store entries as 3-element list including the priority, an entry count, and the task. The entry count serves as a tie-breaker so that two tasks with the same priority are returned in the order they were added. And since no two entry counts are the same, the tuple comparison will never attempt to directly compare two tasks.

This strategy gives a more usable a_star.

import heapq
import itertools

def a_star(start, heuristic, moves):
    costs = {start: 0}
    prev = {start: None}
    seq = itertools.count().__next__
    frontier = [(heuristic(start), seq(), start)]

    def path(s):
        return [] if s is None else path(prev[s]) + [s]

    while frontier:
        _, _, state = heapq.heappop(frontier)
        if heuristic(state) == 0:
            return costs[state], path(state)
        for n in moves(state):
            n_cost = costs[state] + 1
            if n not in costs or n_cost < costs[n]:
                costs[n] = n_cost
                heapq.heappush(frontier, (n_cost + heuristic(n), seq(), n))
                prev[n] = state
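The tie-breaking idea can be checked in isolation. The sketch below is not part of the search itself: it pushes a few deliberately unorderable State objects (a hypothetical class, like the one in the interpreter session earlier) onto a heap as (priority, count, state) entries, then pops them back off.

```python
import heapq
import itertools

class State:
    """Deliberately unorderable: defines no __lt__."""
    def __init__(self, name):
        self.name = name

counter = itertools.count()
heap = []
for priority, name in [(2, 'c'), (1, 'a'), (1, 'b')]:
    # The count sits between priority and state, so tuple comparison
    # is always settled before it reaches the State objects.
    heapq.heappush(heap, (priority, next(counter), State(name)))

order = [heapq.heappop(heap)[2].name for _ in range(len(heap))]
print(order)  # ['a', 'b', 'c']
```

The two entries tied on priority 1 come back in the order they were pushed, and no TypeError is raised.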

Binary search gets a sort key

Suppose you have a list of distinct elements which has been sorted and rotated. How would you look up an element within that list?

For example, the list:

[7, 11, 13, 19, 2, 3, 5]

is sorted (the first 7 primes, in order) and rotated (to put 7 first).

With this list as input, then:

- look up 13 returns 2 since 13 is at index 2
- look up 2 returns 4
- look up 4 returns the sentinel value -1

The obvious technique is to just search the list:

def lookup(values, v):
    try:
        return values.index(v)
    except ValueError:  # list.index raises ValueError when v is absent
        return -1

This is a linear algorithm which processes the entire list. Is there a way to take advantage of its sorted+rotated-ness?

If the list was sorted then we could apply a binary search for a logarithmic lookup. And in fact, by applying a custom ordering, the list is sorted.

How can we apply a custom ordering in Python?

The way to do this has changed as Python has developed. The table below shows the evolution of standard Python functions which sort and compare.

| Version | Year | Functions | Notes |
|---|---|---|---|
| 2.0 | 2000 | max, min, list.sort(cmp), bisect.bisect | Note that cmp slows the sorting process down considerably |
| 2.3 | 2003 | heapq | Also known as the priority queue algorithm |
| 2.4 | 2004 | sorted(iterable[, cmp[, key[, reverse]]]), list.sort([cmp[, key[, reverse]]]), itertools.groupby(iterable[, key]) | In general, the key and reverse conversion processes are much faster than specifying an equivalent cmp function |
| 2.4 | 2004 | heapq.nlargest, heapq.nsmallest | Heapq extended |
| 2.5 | 2006 | max([key]), min([key]), heapq.nlargest(…[, key]), heapq.nsmallest(…[, key]) | |
| 2.6 | 2008 | heapq.merge | Heapq extended again |
| 3.0 | 2008 | sorted(iterable, key[, reverse]), list.sort(key[, reverse]) | No more cmp |
| 3.5 | 2015 | heapq.merge(…, key) | |
| 3.10 | 2021 | bisect.bisect(…, key) etc | That leaves the low-level heapq functions |

The earliest versions of Python allowed you to sort lists, and the sort was customised using a cmp function, though the documentation warned there would be a performance penalty[*]. The builtin min and max functions could not be customised, and nor could the comparison used in the bisect module, which is Python’s binary search implementation.

At 2.3 the heapq module appeared, but, like bisect, there was no way to customise the ordering of heap elements.

2.4 introduced the key argument to customise ordering, noting this should be preferred to cmp. Unlike cmp which compares two elements, key takes a single element and returns a sort rank for that element. The key argument was added alongside cmp in list.sort and the new sorted builtin function; and key was the only way to customise the grouping in itertools.groupby.

2.5 added key to min and max, and also to heapq.nlargest, heapq.nsmallest. Although these heapq functions now accept a key, the lower level heap functions to heapify, push, pop and replace do not.
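A short sketch of that split, using a made-up list of words ranked by length: nlargest and nsmallest take a key directly, whereas with heappush the rank has to be stored in the heap entry itself.

```python
import heapq

words = ['fig', 'banana', 'kiwi', 'apple']

# The higher level functions accept a key.
longest = heapq.nlargest(2, words, key=len)
shortest = heapq.nsmallest(2, words, key=len)
print(longest)   # ['banana', 'apple']
print(shortest)  # ['fig', 'kiwi']

# heappush has no key parameter, so push (rank, item) pairs instead.
heap = []
for w in words:
    heapq.heappush(heap, (len(w), w))
first = heapq.heappop(heap)
print(first)     # (3, 'fig')
```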

2.6 introduced heapq.merge, a handy function to merge sorted inputs using a heap, but with no option to specify a sort key.

3.0 got rid of cmp, making key the only way to customise sorting. To migrate from Python 2 to 3 any cmp functions need converting to key functions. As with Python 2.5, at 3.0 you could apply a key to list.sort, sorted, min, max, itertools.groupby, heapq.nlargest, heapq.nsmallest, but not to bisect or other heapq functions.
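For that migration, the standard library offers functools.cmp_to_key, which wraps an old-style comparison function so it can be passed as a key. A minimal sketch, where by_length_cmp is a made-up comparison used only for illustration:

```python
from functools import cmp_to_key

def by_length_cmp(a, b):
    """Old-style cmp: negative, zero or positive, like Python 2's cmp."""
    return (len(a) > len(b)) - (len(a) < len(b))

words = ['pear', 'fig', 'banana']

via_cmp = sorted(words, key=cmp_to_key(by_length_cmp))  # wrapped cmp function
via_key = sorted(words, key=len)                        # equivalent key function
print(via_cmp)  # ['fig', 'pear', 'banana']
print(via_key)  # ['fig', 'pear', 'banana']
```

Where a direct key function exists, as len does here, it is usually the simpler choice.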

3.5 added key to heapq.merge, aligning it with heapq.nlargest and heapq.nsmallest, though it remained impossible to use a sort key with the lower level heap functions.

The next change came in 3.10, when the key parameter was added to the bisect module. As far as I can tell that means the only part of standard Python which doesn’t let you fully customise ordering is the heapq module.

Bisect with a search key

So, to return to the original puzzle, consider our example sorted+rotated list:

>>> values = [7, 11, 13, 19, 2, 3, 5]

The first four elements are greater than or equal to the first element, 7. The last three elements are less than 7.

>>> [v < values[0] for v in values]

[False, False, False, False, True, True, True]

Since False < True the result of the list comprehension above is sorted.

Extending this idea, the following comprehension is also sorted.

>>> [(v < values[0], v) for v in values]

[(False, 7), (False, 11), (False, 13), (False, 19), (True, 2), (True, 3), (True, 5)]

Now we have a logarithmic lookup using binary search:

from bisect import bisect_left

def lookup(values, v):
    key = lambda x: (x < values[0], x)
    i = bisect_left(values, key(v), key=key)
    return i if (i < len(values) and values[i] == v) else -1

Don’t search for v, search for its key

I was surprised to realise the value v being looked up has to have the key function applied before calling bisect_left. That is, to find where v should go in values to maintain the sort order, we pass key(v) to bisect_left. This doesn’t match the interface to binary search in other languages. It also means in our example we have to handle empty lists as a special case.

def lookup(values, v):
    if not values: return -1
    key = lambda x: (x < values[0], x)
    i = bisect_left(values, key(v), key=key)
    return i if (i < len(values) and values[i] == v) else -1

[*] Before the introduction of the sort key, the standard pattern was decorate-sort-undecorate.

On Exactitude in Programming

Recently I attended a demonstration of a product intended to help design software, right down to implementation details. It failed to convince me. It did, however, succeed in reminding me of this short work by Jorge Luis Borges:

On Exactitude in Science

Jorge Luis Borges, Collected Fictions, translated by Andrew Hurley.

…In that Empire, the Art of Cartography attained such Perfection that the map of a single Province occupied the entirety of a City, and the map of the Empire, the entirety of a Province. In time, those Unconscionable Maps no longer satisfied, and the Cartographers Guilds struck a Map of the Empire whose size was that of the Empire, and which coincided point for point with it. The following Generations, who were not so fond of the Study of Cartography as their Forebears had been, saw that that vast Map was Useless, and not without some Pitilessness was it, that they delivered it up to the Inclemencies of Sun and Winters. In the Deserts of the West, still today, there are Tattered Ruins of that Map, inhabited by Animals and Beggars; in all the Land there is no other Relic of the Disciplines of Geography.

—Suarez Miranda, Viajes de varones prudentes, Libro IV, Cap. XLV, Lerida, 1658

and also of this tweet by @KevlinHenney:

The act of describing a program in unambiguous detail and the act of programming are one and the same.

— Kevlin Henney (@KevlinHenney) August 17, 2009

Python maths updates

A quick note on some useful updates made to standard Python maths support. math.prod does for * what sum does for +. It was added at Python 3.8.

>>> math.prod([3, 4, 5])
60
>>> math.prod([])
1

Added in 3.9, math.lcm returns the least common multiple of its integer arguments. As with math.prod, an empty list of arguments returns 1. Also extended in 3.9, the related function math.gcd now accepts an arbitrary number of arguments.

For Euclidean geometry, math.hypot now supports n-dimensional points, and math.dist has been added, again working on any pair of n-dimensional points.
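A few illustrative calls, with values chosen here only for the example and assuming Python 3.9 or later:

```python
import math

print(math.lcm(4, 6, 10))     # 60
print(math.lcm())             # 1, the empty case
print(math.gcd(12, 18, 30))   # 6

# A 3-dimensional point and its distance from the origin.
print(math.hypot(1, 2, 2))              # 3.0
print(math.dist((0, 0, 0), (1, 2, 2)))  # 3.0
```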

These useful additions and extensions are all in the standard math module (along with several others not mentioned here). Previously you’d have to roll your own or rely on e.g. numpy.

I also wanted to mention a nice extension to the power function; in this case to the builtin pow — math.pow remains as it was. Builtin pow takes an optional third parameter pow(base, exp[, mod]). If mod is specified it must be a non-zero integer, and both base and exp must also be integers. The significant but subtle change made at 3.8 is that exp can now be a negative integer, enabling modular inverse calculations.

Quoting the documentation:

… If mod is present and exp is negative, base must be relatively prime to mod. In that case, pow(inv_base, -exp, mod) is returned, where inv_base is an inverse to base modulo mod.

Here’s an example of computing an inverse for 38 modulo 97:
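As a sketch of that calculation (assuming Python 3.8 or later), the inverse can be computed and then checked by multiplying back:

```python
inverse = pow(38, -1, 97)
print(inverse)              # 23

# Multiplying by the original base gives 1 modulo 97.
check = 38 * inverse % 97
print(check)                # 1
```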

Fearless Debugging

Jurassic Jigsaw

My thanks to Eric Wastl for another excellent Advent of Code. I’ve now worked through all 25 puzzles, some simple, some tough, some familiar, some new; all beautifully set and highly enjoyable.

Day 20, Jurassic Jigsaw, took me longest by far to complete. The puzzle is easy to understand. You have to assemble jigsaw pieces into a seascape. For part one, you need to find the corner pieces. For part two, you must locate monsters in the seascape.

Here, a jigsaw piece is a monochrome square, represented like so:

..##.#..#.

##..#.....

#...##..#.

####.#...#

##.##.###.

##...#.###

.#.#.#..##

..#....#..

###...#.#.

..###..###

And this is the sea monster:

                  #
#    ##    ##    ###
 #  #  #  #  #  #

The twist was that the jigsaw pieces could be rotated and flipped over before matching them up. Once matched, the edges of the pieces were to be removed. Implementing the rotate, flip, match and remove code proved fiddly, and I was pleased to get this working and producing correct results for the supplied example.

Unfortunately my code produced the wrong answer for part two, the bit where you have to locate sea monsters. So, I would need to debug.

Fear of Debugging

Like it or not, debugging is a key part of software development.

Generally, I do like it. There’s satisfaction to be had in paring scope, retracing steps, then making the right fix, improving test coverage, and generally leaving the world a better place.

What I don’t like, though — what scares me — is the suspicion the bug lurks in the worst possible place. What if it’s in a library we don’t have the source for? Or if it’s a timing issue caused by some erratic locking? Or if there’s an issue with the compiler for the most obscure platform. Or, in this particular case, that the code to fiddle with jigsaw pieces has a bug — a bug subtle enough to pass the examples but fail the real thing.

It’s not unreasonable to fear the worst. At some point the issues mentioned above will cause bugs, bugs which are hard to fix. We need to be ready.

Fear, though, is the wrong mindset. Debugging requires you to be calm, methodical and detached. Much as an artist draws what they see and not what they know, a programmer must observe and follow the evidence. A second pair of eyes is always useful; simply explaining the issue to a colleague gives you another perspective.

Here be Monsters

I was working on my own on the Advent of Code. I could have tried explaining to a rubber duck, but didn’t. Instead I examined and traced my code. I checked and double-checked the flip, rotate, match and remove logic. I printed out intermediate state. I walked to the sea-front and back. I slept on it.

Fearing the worst turned out to be the wrong strategy.

What did work was reading the problem statement again, carefully. At last I saw it! Somehow, a rogue character had wormed its way into my inputs. The sea monster I had used looked like this:

#

# ## ## ###

# # # # # #

when it should have been:

                  #
#    ##    ##    ###
 #  #  #  #  #  #

Since both monsters appear the same number of times in the example seascape, my test case failed to catch the bug.

So, with a single, simple, whitespace correction, my advent of code was complete. One of life’s ironies is that when you lose something you find it in the last place you look. It’s not always so with debugging: a bug can exist in many different places and sometimes the fix causes the codebase to unravel, requiring a chain of additional fixes, but, in this case, finding the bug fixed it.

A monster can exist in many different places, and in this case finding the monster fixed it

Complex numbers for planar geometry

Once again, I’m enjoying solving Eric Wastl’s excellent Advent of Code puzzles.

Today, day 12 involved a ship navigating in a 2D plane. The ship follows a series of instructions taking the form of actions and values such as F10 N3 F7 R90 F11 ..., where, for the first part:

- actions N, S, E, W step by the value in the compass directions N, S, E, W

- actions L, R turn the ship left and right by the number of degrees specified by the value

- action F advances the ship in the direction it faces by the given value

Perhaps the most obvious way to model this is by implementing a Point class, with x and y values, and suitable methods to advance and rotate.

Another way is to use complex numbers, which are natively supported by Python — and many other languages. The complex plane is a 2D plane. The point (x, y) is represented by a single complex number p = x + y * j. Stepping in any direction corresponds to addition, and rotation to multiplication.

So, the instructions N, S, E, W map to adding complex numbers j, -j, 1, -1.

Quarter turns left and right map to multiplication by j and -j.
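As a quick check of that correspondence, here is a minimal sketch using only Python’s builtin complex type. The variable names are illustrative.

```python
east, north, west, south = 1, 1j, -1, -1j

# A quarter turn left (anticlockwise) is multiplication by j.
assert east * 1j == north
assert north * 1j == west

# A quarter turn right (clockwise) is multiplication by -j.
assert east * -1j == south

# The same holds for any point, not just the unit steps.
p = 3 + 4j
left, right = p * 1j, p * -1j
print(left, right)  # (-4+3j) (4-3j)
```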

def ship_position(instructions):
    '''Return the final position of the ship after following the instructions

    Instructions are a series of (action, value) pairs.
    '''
    ship, direction = 0, 1 # Start at the origin facing E
    step = {'N':1j, 'W':-1, 'S':-1j, 'E': 1}
    turn = {'R':-1j, 'L':1j}
    for action, value in instructions:
        if action in 'NSEW':
            ship += value * step[action]
        elif action in 'LR':
            assert value % 90 == 0 # check rectilinear motion
            direction *= turn[action] ** (value//90)
        else:
            assert action == 'F'
            ship += value * direction
    return ship

It’s also possible to implement the conditionals above arithmetically. We can think of each instruction advancing the ship (possibly by zero) and turning it (possibly by zero degrees).

def ship_position(instructions):
    ship, direction = 0, 1
    step = {'N':1j, 'W':-1, 'S':-1j, 'E': 1}
    turn = {'R':-1j, 'L':1j}
    for action, value in instructions:
        ship += value * (step.get(action, 0) + (action=='F') * direction)
        direction *= turn.get(action, 1) ** (value//90)
    return ship
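To see the function at work, the sketch below runs it on the instruction sequence quoted earlier, F10 N3 F7 R90 F11. The parse helper is a hypothetical addition for turning the text into (action, value) pairs, and the function is repeated so the example runs on its own.

```python
def parse(text):
    """Hypothetical helper: 'F10 N3' -> [('F', 10), ('N', 3)]."""
    return [(word[0], int(word[1:])) for word in text.split()]

def ship_position(instructions):
    # The arithmetic version from above.
    ship, direction = 0, 1
    step = {'N': 1j, 'W': -1, 'S': -1j, 'E': 1}
    turn = {'R': -1j, 'L': 1j}
    for action, value in instructions:
        ship += value * (step.get(action, 0) + (action == 'F') * direction)
        direction *= turn.get(action, 1) ** (value // 90)
    return ship

ship = ship_position(parse('F10 N3 F7 R90 F11'))
print(ship)  # (17-8j): 17 east, 8 south

# Manhattan distance from the origin.
distance = int(abs(ship.real) + abs(ship.imag))
print(distance)  # 25
```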

More solutions here, and thanks again to Eric Wastl.

Cryptic Message

Anyone able to help make sense of this bizarre tweet which appeared on my timeline?

The challenge is to decipher the message

— Thomas Guest (@thomasguest) June 8, 2019

Please answer using the same style so others can find their own solution. Bonus points for both quality and quantity.

Dr G’s Award Winning Puzzles

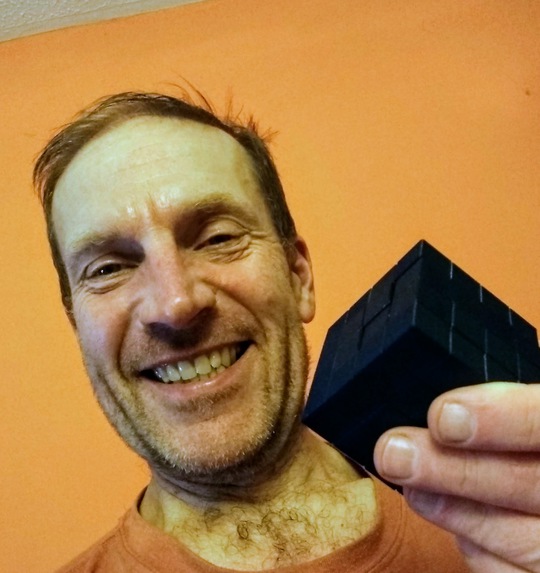

A colleague of mine shares my love of puzzles. Whilst I like solving them, he also likes to design and build them. What I hadn’t realised is that there’s a community of puzzlers out there, who hold conventions, share designs, and generally celebrate and advance the art of puzzling.

Dr G — my colleague — is part of that community and also owns a 3D printer. He works from home but whenever he visits the office there’ll be a freshly-printed puzzle for us to play with. I feel as excited as a young hobbit when Gandalf visits the Shire.

The best puzzles have just a few simple pieces. You can see how the parts must finally align but the geometry conspires to confound. There will be a sequence of twists and turns. There must be.

Casino, designed by Dr Volker Latussek, is both mould breaking and an instant classic. You have to slot 6 identical casino chips into a cubic box. The lip which makes the opening to the box rectangular rather than square is the single asymmetry which makes this task almost impossible rather than utterly trivial.

I fiddled with it, rolling the chips into position, sliding and shunting. After many minutes of manipulation I put it down, the chips loosely arranged in and on top of the box. After a lunch break I picked it up, and to my amazement the chips slid easily and directly into place.

PackTIC, designed by Andrew Crowell, is a very different puzzle. The TIC stands for Turning Interlocking Cube. A bit like a 3D version of Tetris, you have to manipulate 5 very different cubilinear pieces so they fuse into an irregular chassis. I took this one on the train with me. At the end of the journey I had figured out how the pieces would fit, but not how to assemble them. The following morning, as with the Casino puzzle, everything just clicked into place.

Aligning the first line of a triple-quoted string in Python

Python’s triple-quoted strings are a convenient syntax for strings where the contents span multiple lines. Unescaped newlines are allowed in triple-quoted strings. So, rather than write:

song = ("Happy birthday to you\n"
        "Happy birthday to you\n"
        "Happy birthday dear Gail\n"
        "Happy birthday to you\n")

you can write:

song = """Happy birthday to you
Happy birthday to you
Happy birthday dear Gail
Happy birthday to you
"""

The only downside here is that the first line doesn’t align nicely with the lines which follow. The way around this is to embed a \newline escape sequence, meaning both backslash and newline are ignored.

song = """\
Happy birthday to you
Happy birthday to you
Happy birthday dear Gail
Happy birthday to you
"""

Python Counters @PyDiff

On Monday I gave a talk at PyDiff on the subject of Python Counters. A Counter is a specialised dict which has much in common with a set. Of course all dicts are like sets, but with Counters the resemblance is even stronger. The documentation states:

The Counter class is similar to bags or multisets in other languages.

Alongside set operations of union, intersection and is-a-subset, Counter also supports addition and subtraction — natural and unsurprising operations for a container whose job is to keep count of its elements. If you want to unite the contents of two Counters, it’s probably + you want rather than |.
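A minimal sketch of the difference, using two small made-up Counters:

```python
from collections import Counter

a = Counter('aab')   # a: 2, b: 1
b = Counter('abc')   # a: 1, b: 1, c: 1

total = a + b        # counts are added
union = a | b        # maximum of each count
common = a & b       # minimum of each count
left = a - b         # counts subtracted, non-positive results dropped

print(total)   # Counter({'a': 3, 'b': 2, 'c': 1})
print(union)   # Counter({'a': 2, 'b': 1, 'c': 1})
print(common)  # Counter({'a': 1, 'b': 1})
print(left)    # Counter({'a': 1})
```

Union keeps the larger count of each element, so the two a's and one a combine to two, not three. Addition is the one that pools the contents.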

Counters came to mind as a lightning talk subject since I had a go at the Advent of Code last year and used no fewer than 12 Counters in my solutions to 25 puzzles — and that total could well increase since I haven’t finished yet.

The talk itself is on github.

Metaphormers


A metaphor is a figure of speech where you describe something in terms of something else. For example, when Katy Perry sings “Baby, you’re a firework” she doesn’t mean you’re an explosive for use on bonfire night — she’s saying you’ve got a spark inside you, you should “ignite the light”, “show ‘em what you’re worth”, “shoot across the sky” and “make ‘em go, Oh, oh, oh!”.

You’re spectacular — a firework.

This post considers some metaphors for computer programming. I hope we can gain some insights into what we do by looking at it from a different angle. In other words: what are we like?


We write software. That’s not a metaphor though. It’s literally what we do. We write, edit, review and publish texts. In scrum teams we even work on stories. It’s not particularly helpful.

It’s not unhelpful either, unlike the next couple of examples, for which I blame recruitment agents.


An advert for a programming ninja isn’t meant to attract a Japanese mercenary trained in espionage and assassination, must have 2 years PHP experience; it’s meant to make a dull job sound exciting. Similarly a rockstar is unwelcome on most software teams. Creativity and even attitude may be useful. Not so the rampant ego and tantrums.


How about sports? We’re agile. We work in teams on sprints scoring (story) points. In our scrum meetings we discuss tactics.


Is coding like cooking? We assemble the right ingredients and follow tried and trusted recipes. Our products are consumed and adjusted to taste based on feedback.


Software grows organically. Tending to a codebase is a form of gardening — we nurture new features and allow them to blossom, all the while pruning dead code and trying to keep bugs under control. Release cycles are seasonal. Success depends on both weather and climate.


If Computer Science is the discipline, The Art of Computer Programming supplies the detail, and the practice is often described as a Craft. There’s a progression, from journeyman to master, during which we learn the tools of our trade. Although end users may never see the elegant code which underpins the interface they use, we take pride in our work and like to think they can sense our attention to detail.


The most popular metaphor is construction. It’s there in our job titles: Architect, Project Manager, Engineer. We plan, assemble, build, test, deliver. Unfortunately this metaphor fails to recognise the supple, fluid nature of software — its softness.

If you’re building a house you start with foundations and then the walls go up, at which point it becomes impossible to change the foundations. If you’re building software, you could start with the wiring and then put the roof on. There’s nothing stopping you swapping the foundations at any point: running on another platform, or switching the memory manager, for example, is no more difficult than changing the user interface styling.

Or consider a software service, supported by a collaboration of microservices which are continually developed, reconfigured, replaced. That’s not construction. It’s communication.


Communication is the metaphor I’m going to champion. Code communicates, via compiler or interpreter, with the platform. It communicates with us via editor and browser. We use text, pictures, speech, gestures. We share rooms, screens, thoughts. We listen to our users. We engage with our community.

At the start of this post I dismissed “writing” as too literal. Beyond the literal and beneath the words, it’s evident we’re in the language business: not just programming languages, but also the dialect of our APIs and modules, the metaphors which describe and define our designs. Design patterns are simply shared metaphor — factory, visitor, facade — and a codebase communicates by shaping and extending its own local metaphors.

Software is the development of metaphor. We are metaphormers.

As Katy Perry would say: Oh, oh, oh!

§

The icons used on this page were downloaded from http://glyphicons.com and are licensed under the CC BY 3.0 terms.

Creating a dict of lists in Python

Suppose you have a list of objects which you want to convert into a dict mapping from some object key to the (sub-)list of objects with that key. To provide a simple example, let’s start with a list of fruits.

from collections import namedtuple

Fruit = namedtuple('Fruit', 'name colour')

def banana(): return Fruit('banana', 'yellow')

def grape(): return Fruit('grape', 'green')

def pear(): return Fruit('pear', 'green')

def strawberry(): return Fruit('strawberry', 'red')

def cherry(): return Fruit('cherry', 'red')

fruits = [

banana(), pear(), cherry(), cherry(), pear(),

grape(), banana(), grape(), cherry(), grape(),

strawberry(), pear(), grape(), cherry()]

We’d like to arrange a fruitbowl — a dict which groups fruits by colour. This can be done by creating an empty bowl, then iterating through the fruits placing each in the correct list.

fruitbowl = {}

for fruit in fruits:
    fruitbowl.setdefault(fruit.colour, []).append(fruit)

dict.setdefault is a bit of an oddity in Python, both doing something and returning a value, but it’s a convenient shorthand in this case. Despite this convenience it’s more common to use a defaultdict.

from collections import defaultdict

fruitbowl = defaultdict(list)

for fruit in fruits:
    fruitbowl[fruit.colour].append(fruit)

Here’s a function to display the fruitbowl.

def print_bowl(bowl):
    print('\n'.join(
        '{}: {}'.format(colour,
                        ', '.join(f.name for f in fruits))
        for colour, fruits in bowl.items()))

If we call this function, we see the fruits have indeed been grouped by colour.

>>> print_bowl(fruitbowl)
yellow: banana, banana
green: pear, pear, grape, grape, grape, pear, grape
red: cherry, cherry, cherry, strawberry, cherry

This is all fine and idiomatic Python, but whenever I see an empty dict being created followed by a loop to populate it, I wonder if a comprehension could be used.

Is there a way to declare and initialise the dict in a single expression? Here’s the best I came up with.

from operator import attrgetter

from itertools import groupby

colour = attrgetter('colour')

fruitbowl = {

col: list(fts)

for col, fts in groupby(sorted(fruits, key=colour), colour)}
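The call to sorted matters here: groupby only groups consecutive items which share a key. A minimal sketch, using a short made-up list of colour names rather than the fruits themselves:

```python
from itertools import groupby

colours = ['green', 'red', 'green', 'green']

# Without sorting, 'green' shows up as two separate groups.
unsorted_groups = [(k, len(list(g))) for k, g in groupby(colours)]
print(unsorted_groups)  # [('green', 1), ('red', 1), ('green', 2)]

# Sorting first brings equal keys together.
sorted_groups = [(k, len(list(g))) for k, g in groupby(sorted(colours))]
print(sorted_groups)    # [('green', 3), ('red', 1)]
```

In a dict comprehension the unsorted version would silently keep only the last group seen for each key.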

Is this better than the defaultdict solution? Probably not, but it’s a technique worth remembering. Maybe the fruitbowl isn’t needed, and we actually just need to iterate through the fruits grouped by colour. For example, which colour is most popular?

>>> max(fruitbowl.items(), key=lambda kv: len(kv[1]))[0]
'green'

Using groupby, we don’t need the bowl.

>>> def grouplen(k_gp):
...     return sum(1 for _ in k_gp[1])
...
>>> max(groupby(sorted(fruits, key=colour), colour), key=grouplen)[0]
'green'

In this case, we don’t need groupby either. There is more than one way to do it.

>>> from collections import Counter

>>> Counter(map(colour, fruits)).most_common(1)

[('green', 7)]

TIMTOWTDI vs TSBO-APOO-OWTDI

TIMTOWTDI

TIMTOWTDI stands for “There is more than one way to do it”, an approach promoted by the Perl community.

The mindset behind it gets explored in more detail by the language’s creator, Larry Wall, in a talk given in 1999: “Perl, the first postmodern computer language”. He attributes the slogan to his daughter, Heidi, who says it’s a strategy which works well in her maths class; and she associates it with another saying used at school: “Tsall Good”. This doesn’t mean everything is good, or even everything has good bits. It means, overall, things are good. See the big picture.

Perl epitomises this. It’s eclectic and inclusive, supporting a variety of styles. One-liner? Fine! Like a shell script? Sure! Structured programming, object-oriented, functional? Why not! Tsall good.

I like that.

But do I feel that way about programming?

TSBO-APOO-OWTDI

A contrasting mantra appears in the Zen of Python, a list of aphorisms which summarise the guiding principles behind Python’s design. Item number 13 states “There should be one — and preferably only one — obvious way to do it.”

Perhaps realising this sounds overly prescriptive, this rule is tempered by item 14, “Although that way may not be obvious at first unless you’re Dutch.”

Guido van Rossum, Python’s BDFL — Benevolent Dictator For Life — would be the Dutch person who finds things obvious. That’s right: Dictator. Programmers don’t like being told what to do any more than two year olds. How then has Python become so popular?

Maybe emphasis falls on should. There should be only one obvious way to do it: it’s just that — Dutch or otherwise — we haven’t got there yet.

TIMTOP

For example, there is more than one Python. Obviously there’s Python 2 and Python 3, but it’s less obvious which to use. Don’t forget PyPy. Increasingly Python comes packaged with data processing and visualisation extensions, served up as a Jupyter notebook.

TIMTOPOM

There is more than one program options module.

When I started with Python there was getopt, the one and only command line handler. Coming from a C/C++ background I was quite happy to use something resembling GNU’s getopt. Then optparse appeared. Now there’s argparse. All of these libraries are readily available. Which should I use? Not optparse, that’s deprecated, unless I’m already using it and it works, that is. Regarding the other contenders, the documentation archly notes:

Users who are unfamiliar with the C getopt() function or who would like to write less code and get better help and error messages should consider using the argparse module instead.

There are other non-standard Python options for parsing a command line too: ones which generate code from the usage notes, or by inspecting the code you want to expose.

There is more than one way to do it.

TIMTOUTF

There is more than one unit test framework. The obvious one, unittest, like getopt, draws inspiration from elsewhere, in this case Java’s JUnit. Unfortunately the port is too faithful, and you’ll have to inherit from superclasses etc. to test something. I much prefer pytest, which flexes the language itself to deliver test assertions as asserts.
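The difference in style is easy to see side by side. This is only a sketch: add is a made-up function under test, and the pytest-style test is called directly rather than collected by pytest, so the example needs nothing beyond the standard library.

```python
import unittest


def add(a, b):
    """Hypothetical function under test."""
    return a + b


# unittest style: tests are methods on a class derived from TestCase,
# and checks go through assertion methods.
class AddTest(unittest.TestCase):
    def test_add(self):
        self.assertEqual(add(2, 3), 5)


# pytest style: a plain function and a plain assert.
# pytest would find this by its test_ prefix; here we just call it.
def test_add():
    assert add(2, 3) == 5


test_add()
suite = unittest.defaultTestLoader.loadTestsFromTestCase(AddTest)
result = unittest.TextTestRunner().run(suite)
```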

There’s also a doctest module in the standard library which executes and checks code found in strings (hold that thought!), and there are many other non-standard testing frameworks.
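As a small illustration of code found in strings, the sketch below puts two examples in a docstring and asks doctest to run them. The square function is invented for the example, and driving DocTestParser and DocTestRunner by hand is just one way to do it; doctest.testmod() is the more usual entry point.

```python
import doctest


def square(x):
    """Return x squared.

    >>> square(3)
    9
    >>> square(-2)
    4
    """
    return x * x


# The docstring is an ordinary string: parse the examples out of it,
# then execute them and compare actual output with expected output.
parser = doctest.DocTestParser()
test = parser.get_doctest(square.__doc__, {"square": square}, "square", None, 0)
runner = doctest.DocTestRunner(verbose=False)
results = runner.run(test)
print(results.failed, "failed of", results.attempted)
```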

There is more than one way to do it.

TIMTOWOFS

There is more than one way of formatting strings.

As we’ve seen there’s more than one Python, and libraries are always up for reinvention. This is arguably evolution rather than a multiplicity of options. That is, the most recent way to do it should be preferred.

When it comes to string formatting, though, there has always been more than one way to do it, and more ways are still being added.

Do you use 'single' or "double" quotes for a string? """Triple""" quotes. Raw strings? Raw with an r or Raw with an R? TIMTOWTDI.
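A quick check confirms that these really are different spellings of the same thing. The string values here are arbitrary.

```python
single = 'duct tape'
double = "duct tape"
triple = """duct tape"""
# The quote style is not part of the value.
assert single == double == triple

# Raw strings leave backslashes alone; the prefix may be r or R.
lower_raw = r'C:\new\table'
upper_raw = R'C:\new\table'
escaped = 'C:\\new\\table'
assert lower_raw == upper_raw == escaped
print(lower_raw)
```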

What if you want to embed the value of a variable in a string? Users familiar with C’s printf() function might prefer % formatting. Fans of $shell $parameter $expansion can use template strings.
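Here is a short sketch of both approaches producing the same result. The variable names and the message are made up for illustration.

```python
from string import Template

tool = "duct tape"
count = 3

# printf-style: %d and %s are filled in from the tuple, in order.
percent = "I have %d rolls of %s" % (count, tool)

# Shell-style: $names are looked up in the keyword arguments.
template = Template("I have $count rolls of $tool").substitute(count=count, tool=tool)

print(percent)
print(template)
```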

Advanced string formatting — str.format — appeared in Python 3.0, backported to Python 2.6. No doubt it has advantages over % formatting, but for me it’s a little more obscure and a little less obvious. Python 3.6 introduces f-strings which build on str.format and knock down my reservations. The syntax allows you to evaluate expressions in strings: evidently Python is heading in Perl’s direction.
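For comparison, a minimal sketch of str.format next to an f-string, assuming Python 3.6 or later. The variable names and messages are invented for the example.

```python
tool = "duct tape"
count = 3

# str.format: values are passed in as arguments and slotted into the braces.
formatted = "I have {} rolls of {}".format(count, tool)

# f-string: names are read straight from the surrounding scope.
interpolated = f"I have {count} rolls of {tool}"

# f-strings evaluate arbitrary expressions between the braces.
doubled = f"Twice that is {count * 2} rolls of {tool.upper()}"

print(formatted)
print(interpolated)
print(doubled)
```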

APSDOTADIW

Let’s finish by returning to Perl, and to Larry Wall’s 1999 talk.

How many times have we heard the mantra that a program should do one thing and do it well?

Perl is not that program. Perl wants to do everything well. It integrates features and makes no attempt to homogenise them.

You’ve all heard the saying: If all you have is a hammer, everything starts to look like a nail.

Perl is no hammer: it has memorably been described as a Swiss army chainsaw, but Larry Wall likens it to a more conventional tool.

If all you have is duct tape, everything starts to look like a duct. Right. When’s the last time you used duct tape on a duct?

Python may aspire to offer a single obvious way to do something. It fails splendidly, being more duct tape than hammer.

I presented this blog post as a lightning talk at PyDiff a couple of days ago. The slides are here. The talk was recorded too: I appear about 24 minutes in.

DDD Wales, 2018

The first ever DDD Wales was held yesterday at TechHub Swansea. It was a free-to-attend one-day event comprising 5 full one-hour sessions split into 3 parallel tracks; that makes 15 sessions to choose from. Additionally, there were lightning talks in the lunch break.

Wow what a day! Time for some rest! Thank you again to all our amazing sponsors, exhibitors, Speakers, attendees and venue. See you next year #DDDWales

DDD Wales (@dddcymru), March 24, 2018

I enjoyed Kevin Jones’ introduction to Kotlin, the more so since it was almost entirely coded live. Kevin ably demonstrated Kotlin to be “Java without the ceremony”. I could see connections with other modern compiled languages — Swift for example — languages which aren’t feature-shy, but which aim for a light, clean syntax; languages which build on existing systems and libraries. It was interesting to see his use of the JetBrains IDE as a teaching aid, and indeed to pick up on audience thoughts on the use of IDEs to flesh out code.

Chris Cundill’s talk on “release flow” was another highlight. You may not have heard of release flow but you’ll know what it is: a tried and tested strategy for code branching. Chris used his talk to challenge and call out some more recent alternatives — Gitflow being the prime target. The session got me thinking. One dimension Chris didn’t cover was people: personalities, roles and permissions. Who can merge to which branch? Which developers work in private then push bulk updates? Git has won the version control system battle. The fight has moved into surrounding areas: branching, merging, reviewing, continuous integration, and the competition is bringing improvements in tooling and best practice.

The final talk I attended was David Carboni’s session on creating a minimal Docker container to run a microservice written in Go. David started off by explaining why simplicity matters. I agree. I couldn’t agree more. The rest of the session was, again, live coding, replaying a demo which uses the techniques described in a couple of blog posts to whittle a Docker container down from a base size of ~700MB to a scratch size of ~7MB.

All in all, a great day. The split-level venue suited the three track conference well. The speakers delivered terrific sessions which the audiences engaged with. I’d like to thank the organisers, sponsors, speakers, and other attendees.

Perec @IgniteSwansea #3

At Ignite Swansea #3 I spoke about Georges Perec’s masterpiece, Life A User’s Manual.

Perec was — and indeed still is — a member of OuLiPo, a Parisian literary group interested in exploring the effects of applying mathematical patterns to text. His work seemed an appropriate subject for a presentation constrained to fit the ignite formula:

20 slides × 15 seconds = 5 minutes

It’s material I’ve spoken about before but the slides are new. The talk was recorded. I enjoyed attempting just-a-minute × 5, though on the night I could have sworn I was subject to a cruel PowerPoint bug which sped up the playback.

OuLiPo fans might like to see if they can find the clinamen. The moustache is what happens in the fourth week of Movember. My thanks to all @IgniteSwansea and Cinema & Co for putting on such a great evening.